Dingo-BNS Paper Published in Nature

I’m happy to share that the paper “Real-time inference for binary neutron star mergers using machine learning” has been published in Nature.

In this work, led by Max Dax, we use neural posterior estimation to enable one-second inference for binary neutron stars (BNSs). We obtain complete inference results (including sky localization, orientation, component masses and spins) without making any approximations. Fast inference like this is crucial for multimessenger astronomy, as it enables telescopes to find BNS mergers in the sky as early as possible—even before the merger takes place.

BNS inference is hard because the data are so high-dimensional. Signals can be hundreds of seconds long and span hundreds of orbits. For three interferometers, for instance, we can have \(3 \times (128~\mathrm{s}) \times (4096~\mathrm{Hz}) \sim 10^6\) sample points (compared to \(\sim 10^5\) for a black hole binary).
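To make the counting concrete, here is the arithmetic as a tiny Python snippet. The BNS numbers are the ones quoted above; the 8-second segment for the black hole binary is an assumption chosen only to reproduce the \(\sim 10^5\) order of magnitude.

```python
def n_samples(n_detectors, duration_s, sample_rate_hz):
    """Number of time-domain samples across all detectors."""
    return n_detectors * duration_s * sample_rate_hz


# Numbers quoted in the text: three interferometers, 128 s, 4096 Hz.
bns = n_samples(3, 128, 4096)

# Assumed 8 s segment for a black hole binary (illustrative, not from the paper).
bbh = n_samples(3, 8, 4096)

print(f"BNS: {bns:.1e} samples, BBH: {bbh:.1e} samples")
```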

We employ two standard techniques from GW data analysis to simplify the data:

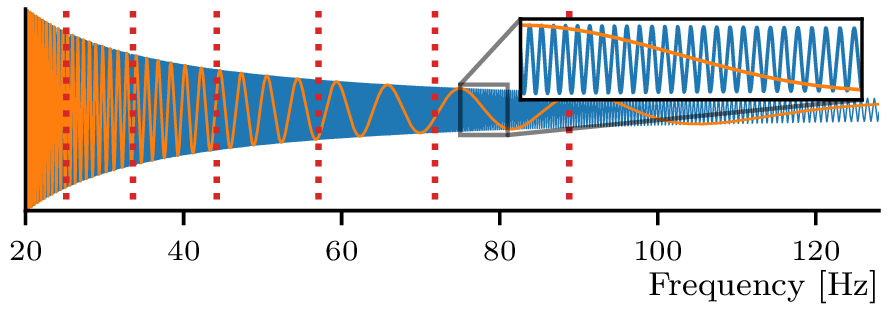

- Heterodyning. We take advantage of the known chirping nature of BNS waveforms to factor out the bulk of the oscillations. We know from general relativity that the phase of the waveform, in the frequency domain, goes to leading order as \[\varphi_\mathcal M = \frac{3}{128}\left(\frac{\pi G\mathcal M f}{c^3}\right)^{-5/3},\] where \(\mathcal M = (m_1 m_2)^{3/5}/(m_1+m_2)^{1/5}\) is the chirp mass of the system, \(f\) the frequency, and \(c\) and \(G\) the speed of light and gravitational constant, respectively. By multiplying the data by \(e^{i\varphi_\mathcal M}\) we effectively cancel out most of the oscillations.

- Multi-banding. We use a coarser resolution at higher frequencies, enabling greater compression of the data for input to the neural network.
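The two steps above can be sketched in a few lines of NumPy. This is a toy illustration, not the Dingo-BNS implementation. The noise-free signal, its \(e^{-i\varphi_\mathcal M}\) sign convention, the 40 Hz lower cutoff (chosen so the toy phase is well sampled), the band edges, and the use of simple block averaging for multi-banding are all assumptions made for this example.

```python
import numpy as np

# G * M_sun / c^3 in seconds, so chirp masses can be given in solar masses.
G_MSUN_OVER_C3 = 4.925491e-6


def chirp_phase(f, chirp_mass_msun):
    """Leading-order phase (3/128) * (pi G M f / c^3)^(-5/3)."""
    x = np.pi * G_MSUN_OVER_C3 * chirp_mass_msun * f
    return 3.0 / 128.0 * x ** (-5.0 / 3.0)


def heterodyne(data_fd, f, chirp_mass_msun):
    """Multiply frequency-domain data by exp(i * phi_M) to remove the chirp."""
    return data_fd * np.exp(1j * chirp_phase(f, chirp_mass_msun))


def multiband(data_fd, f, band_edges, factors):
    """Average groups of k adjacent bins in each band (k grows with frequency)."""
    f_out, d_out = [], []
    for lo, hi, k in zip(band_edges[:-1], band_edges[1:], factors):
        sel = (f >= lo) & (f < hi)
        n = (np.count_nonzero(sel) // k) * k  # drop a ragged tail, if any
        f_out.append(f[sel][:n].reshape(-1, k).mean(axis=1))
        d_out.append(data_fd[sel][:n].reshape(-1, k).mean(axis=1))
    return np.concatenate(f_out), np.concatenate(d_out)


def phase_cycles(x):
    """Total phase variation of a complex series, in units of 2*pi."""
    return np.abs(np.diff(np.unwrap(np.angle(x)))).sum() / (2 * np.pi)


# Toy, noise-free signal on a uniform grid (128 s segment, so df = 1/128 Hz).
# The sign convention exp(-i * phi) is an assumption of this sketch.
duration_s, chirp_mass = 128.0, 1.2
f = np.arange(40.0, 1024.0, 1.0 / duration_s)
signal = f ** (-7.0 / 6.0) * np.exp(-1j * chirp_phase(f, chirp_mass))

het = heterodyne(signal, f, chirp_mass)
f_mb, het_mb = multiband(het, f, [40, 64, 128, 256, 512, 1024], [1, 2, 4, 8, 16])

print(f"phase cycles before heterodyning: {phase_cycles(signal):.0f}")
print(f"phase cycles after heterodyning:  {phase_cycles(het):.2f}")
print(f"frequency bins: {f.size} -> {f_mb.size}")
```

Once the chirp is removed, the data vary slowly with frequency, which is why averaging neighbouring bins at high frequencies loses little information. The compression in this toy setup is much smaller than in the paper, and the numbers are not meant to reproduce it.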

Together, heterodyning and multi-banding greatly simplify the data and reduce its dimensionality. However, for heterodyning to work, the chirp mass \(\mathcal M\) in the formula above has to be close to the true chirp mass of the merger. But the chirp mass itself is unknown, and it is one of the parameters we aim to infer! We cannot simply choose an approximate \(\mathcal M\) and heterodyne in the same way across the entire prior volume.
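To get a feel for how close "close" has to be, the following sketch counts the phase cycles that remain after heterodyning with a slightly wrong reference chirp mass. It uses only the leading-order phase formula above. The 20 to 1024 Hz band and the 1.2 solar-mass system are assumptions for illustration.

```python
import numpy as np

G_MSUN_OVER_C3 = 4.925491e-6  # G * M_sun / c^3 in seconds


def chirp_phase(f, chirp_mass_msun):
    """Leading-order phase (3/128) * (pi G M f / c^3)^(-5/3)."""
    x = np.pi * G_MSUN_OVER_C3 * chirp_mass_msun * f
    return 3.0 / 128.0 * x ** (-5.0 / 3.0)


def residual_cycles(true_mc, ref_mc, f_min=20.0, f_max=1024.0):
    """Phase cycles left across [f_min, f_max] after heterodyning with ref_mc.

    The residual phase is monotonic in f, so the difference between the two
    band edges equals its total variation.
    """
    f = np.array([f_min, f_max])
    residual = chirp_phase(f, true_mc) - chirp_phase(f, ref_mc)
    return abs(residual[0] - residual[1]) / (2 * np.pi)


true_mc = 1.2  # solar masses (assumed)
for offset in (0.0, 1e-4, 1e-3, 1e-2, 1e-1):
    n = residual_cycles(true_mc, true_mc + offset)
    print(f"reference chirp mass off by {offset:g} Msun: {n:.2f} residual cycles")
```

In this toy setup, an offset of a tenth of a solar mass already leaves hundreds of residual cycles, which is why a single reference chirp mass cannot cover a broad prior.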

Our solution is to use a new technique called prior conditioning to build networks with narrow but tunable \(\mathcal M\)-priors. On each of these constrained priors, we can factor out the phase: \[ \theta \sim q(\theta \mid e^{i\varphi_{\tilde{\mathcal M}}} \cdot d, \tilde{\mathcal M}), \] where \(q\) is our neural density estimator and \(\tilde{\mathcal M}\) defines its narrow prior. In other words, we factor out the chirp, and we pass \(\tilde{\mathcal M}\) to the network to tell it how we transformed the data. We can quickly select the best \(\tilde{\mathcal M}\) by checking importance sampling efficiency.
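The selection step can be sketched as follows, assuming the common effective-sample-size definition of efficiency, \(\epsilon = (\sum_i w_i)^2 / (N \sum_i w_i^2)\). The function `toy_log_weights` is a hypothetical stand-in that generates synthetic weights whose spread grows as \(\tilde{\mathcal M}\) moves away from an assumed true chirp mass. In a real analysis the weights would come from the likelihood, the prior, and the network density, and nothing here reflects the actual Dingo-BNS code.

```python
import numpy as np


def sample_efficiency(log_weights):
    """Effective sample size divided by the number of samples, in (0, 1]."""
    lw = np.asarray(log_weights, dtype=float)
    w = np.exp(lw - lw.max())  # subtract the maximum for numerical stability
    return w.sum() ** 2 / (w.size * (w ** 2).sum())


def select_reference_chirp_mass(candidates, log_weights_fn):
    """Return the candidate with the highest importance-sampling efficiency."""
    effs = [sample_efficiency(log_weights_fn(m)) for m in candidates]
    best = int(np.argmax(effs))
    return candidates[best], effs[best]


def toy_log_weights(ref_mc, true_mc=1.2, n=5000):
    """HYPOTHETICAL stand-in: weight spread grows with |ref_mc - true_mc|."""
    rng = np.random.default_rng(0)
    scale = 0.1 + 50.0 * abs(ref_mc - true_mc)
    return scale * rng.normal(size=n)


candidates = [1.18, 1.19, 1.20, 1.21, 1.22]
best_mc, best_eff = select_reference_chirp_mass(candidates, toy_log_weights)
print(f"selected reference chirp mass: {best_mc}, efficiency: {best_eff:.2f}")
```

A poorly chosen \(\tilde{\mathcal M}\) shows up as a few samples dominating the weights, which drives the efficiency towards zero, so the maximum is easy to identify.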

The combined effect of heterodyning and multi-banding reduces data dimensionality by a factor of \(\sim 100\), and enables inference (including importance sampling) in about 1 second. Our localization constraints are also about 30% better than existing low-latency methods, which make additional approximations. Finally, we show that by masking out higher frequencies, we can perform inference on partial data, before the merger occurs. For next-generation observatories, this could mean skymaps many minutes before merger, enabling telescopes to search for pre-merger electromagnetic counterparts.
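The link between a frequency cutoff and a time before merger can be illustrated with the leading-order (Newtonian) chirp relation \(\tau(f) = \frac{5}{256}\left(\frac{G\mathcal M}{c^3}\right)^{-5/3}(\pi f)^{-8/3}\). This formula is a standard textbook approximation brought in for illustration. It is not taken from this post, and the masking procedure used in the paper may differ in detail.

```python
import math

G_MSUN_OVER_C3 = 4.925491e-6  # G * M_sun / c^3 in seconds


def time_to_merger(f_hz, chirp_mass_msun):
    """Leading-order time (s) until merger when the signal is at frequency f."""
    tm = G_MSUN_OVER_C3 * chirp_mass_msun
    return 5.0 / 256.0 * tm ** (-5.0 / 3.0) * (math.pi * f_hz) ** (-8.0 / 3.0)


def cutoff_frequency(seconds_before_merger, chirp_mass_msun):
    """Inverse relation: highest frequency reached a given time before merger."""
    tm = G_MSUN_OVER_C3 * chirp_mass_msun
    x = 256.0 / 5.0 * seconds_before_merger * tm ** (5.0 / 3.0)
    return x ** (-3.0 / 8.0) / math.pi


chirp_mass = 1.2  # solar masses (assumed)
for seconds in (1.0, 10.0, 60.0):
    f_cut = cutoff_frequency(seconds, chirp_mass)
    print(f"{seconds:5.0f} s before merger: data available up to about {f_cut:.0f} Hz")
```

Masking everything above the cutoff frequency therefore mimics analysing the signal that many seconds before the merger.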

The main limitation of this work is that the simple heterodyning formula above only accounts for the \((\ell, m) = (2, 2)\) angular radiation multipole. Higher multipoles become important when the component masses are unequal, so the mass ratio has to be close to equal, which rules out its use on neutron star-black hole binaries.

For more on our simulation-based inference program, see the research page. See also the great News and Views kindly written by Michael Williams.